How AI Drawing Generators Turn Simple Text Into Professional Art

AI drawing generators have evolved from simple experiments in neural networks into powerful creative partners that can produce polished visuals in seconds. These tools allow anyone to bridge the gap between imagination and execution, using nothing more than descriptive language. An AI drawing generator maps the words in a prompt to visual features, simulating styles, textures, and lighting that previously required years of technical training to master.

The current landscape of digital creativity is shifting. Whether it is a marketing team needing a unique hero image for a landing page, a game designer conceptualizing a new world, or a hobbyist exploring digital painting, these generators offer a speed and flexibility that traditional software cannot match.

Understanding the Technology Behind AI Drawing Generators

Modern AI drawing generators rely on a process called diffusion. Rather than "gluing" together parts of existing images, as is sometimes assumed, a diffusion model synthesizes every image from scratch, which makes it a more flexible approach to visual creation than earlier generative techniques.

The Role of Diffusion Models

Most top-tier AI drawing generators, such as Midjourney, Stable Diffusion, and FLUX, are built on diffusion or closely related architectures. During the training phase, the model is exposed to billions of images paired with text descriptions. It learns to recognize what a "cat," "cyberpunk city," or "watercolor texture" looks like.

Crucially, it also learns the concept of "noise." Imagine taking a crystal-clear photograph and slowly adding static until it becomes a blur of gray pixels. The AI learns how to reverse this process. When you give a prompt to an AI drawing generator, it starts with a canvas of random noise and gradually "denoises" it, shaping the pixels into a coherent image that aligns with your text description. It is less like searching a database and more like a sculptor finding a statue inside a block of marble.
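
The noising and denoising idea can be illustrated with a toy example. The following is a minimal sketch, assuming a DDPM-style linear noise schedule and a four-value list standing in for an image; the function names and schedule values are illustrative assumptions, not taken from any particular generator. A real model would predict the noise with a trained neural network, whereas this sketch reuses the true noise to show that subtracting it recovers the original.

```python
import math
import random


def alpha_bar_schedule(steps, beta_start=1e-4, beta_end=0.02):
    """Fraction of the original signal that survives after each noising step."""
    alpha_bars, running = [], 1.0
    for i in range(steps):
        beta = beta_start + (beta_end - beta_start) * i / (steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(clean, alpha_bar, rng):
    """Forward process: blend the clean signal with Gaussian noise."""
    noise = [rng.gauss(0.0, 1.0) for _ in clean]
    noisy = [
        math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * n
        for x, n in zip(clean, noise)
    ]
    return noisy, noise


def remove_noise(noisy, predicted_noise, alpha_bar):
    """Reverse estimate: subtract the predicted noise and rescale."""
    return [
        (y - math.sqrt(1.0 - alpha_bar) * n) / math.sqrt(alpha_bar)
        for y, n in zip(noisy, predicted_noise)
    ]


rng = random.Random(0)
pixels = [0.1, 0.5, 0.9, 0.3]  # a tiny stand-in "image"
alpha_bars = alpha_bar_schedule(1000)

noisy, true_noise = add_noise(pixels, alpha_bars[500], rng)
# A trained network would predict the noise; here we cheat and reuse the true noise.
recovered = remove_noise(noisy, true_noise, alpha_bars[500])
```

Because the noise estimate is perfect here, `recovered` matches `pixels` up to floating-point error. In practice the prediction is imperfect, which is why generators refine the image over many small steps.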

From Textual Latent Space to Visual Pixels

When a prompt is entered, a text encoder converts the words into numerical vectors called embeddings. These embeddings live in a high-dimensional space, often loosely called a "latent space," in which related concepts sit close together. For instance, the vector for "dog" is closer to the vector for "puppy" than to the one for "airplane."

The generator uses these embeddings to combine all of the concepts in your prompt. If you ask for a "noir detective dog," the model draws on what it learned about "noir aesthetics," "detective clothing," and "canine anatomy" and looks for a result where all three overlap. The combined embedding then guides the denoising process at every step, so the final image reflects the intent of the creator.
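
The "dog is closer to puppy than to airplane" idea is usually measured with cosine similarity. Here is a minimal sketch, assuming made-up three-dimensional vectors; real text encoders produce vectors with hundreds of dimensions, and the numbers below are invented purely for illustration.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, hand-written for this example only.
toy_embeddings = {
    "dog": [0.9, 0.8, 0.1],
    "puppy": [0.85, 0.9, 0.05],
    "airplane": [0.1, 0.05, 0.95],
}

dog_puppy = cosine_similarity(toy_embeddings["dog"], toy_embeddings["puppy"])
dog_airplane = cosine_similarity(toy_embeddings["dog"], toy_embeddings["airplane"])

print(f"dog vs puppy:    {dog_puppy:.3f}")
print(f"dog vs airplane: {dog_airplane:.3f}")
```

With these toy values, the first score comes out much higher than the second, which is the sense in which related concepts are "grouped together."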

Essential Features for Creative Professionals

To use an AI drawing generator effectively in a professional capacity, one must understand the core functionalities that extend beyond simple text-to-image generation.

Text to Image and Image to Image Workflows

The primary function of any AI drawing generator is text-to-image (T2I). However, the professional workflow often begins with image-to-image (I2I). In this mode, the user provides a reference image—perhaps a rough sketch or a photograph with the desired composition—and the AI uses it as a structural guide. This is invaluable for designers who have a specific layout in mind but want the AI to handle the rendering, lighting, and texture.
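
One common way image-to-image is implemented in Stable Diffusion-style tools is to add partial noise to the reference image and then run only the later part of the denoising schedule. The sketch below assumes a setting usually called "strength" or "denoising strength"; the function name and return format are hypothetical and only model the step arithmetic, not an actual image pipeline.

```python
def img2img_schedule(total_steps: int, strength: float) -> tuple[int, int]:
    """Return (first_step, steps_run) for a hypothetical img2img pass.

    strength=0.0 leaves the reference untouched; strength=1.0 ignores it
    and denoises from pure noise, like plain text-to-image.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    steps_run = min(int(total_steps * strength), total_steps)
    first_step = total_steps - steps_run
    return first_step, steps_run


# Low strength: keep most of the sketch's composition, repaint lightly.
light_touch = img2img_schedule(40, 0.25)
# High strength: keep only the rough layout, repaint heavily.
heavy_repaint = img2img_schedule(40, 0.75)
```

Under these assumptions, a lower strength preserves more of the reference layout, while a higher strength gives the model more freedom to reinterpret it.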

Precision Control with Inpainting and Outpainting

One of the most significant breakthroughs in AI art is the ability to edit specific regions of an image.

- Inpainting: This allows you to highlight a specific area of a generated image and "prompt" the AI to change only that part. For example, if you have a perfect landscape but don't like the tree in the foreground, you can mask the tree and ask the AI to replace it with a "vintage lamp post."

- Outpainting (Generative Expand): This feature extends the boundaries of an image. If you have a portrait with a tight crop, outpainting can "imagine" what the rest of the room looks like, maintaining the same lighting, shadows, and artistic style to create a wide-angle view.
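
Both features come down to a mask that tells the model which pixels it may change. The following is a simplified sketch using small nested lists as grayscale images; the helper names are hypothetical, and real tools typically apply the mask inside the diffusion process rather than as a single final blend.

```python
def composite(original, generated, mask):
    """Keep original pixels where mask is 0 and take generated pixels where mask is 1."""
    return [
        [gen if m else orig for orig, gen, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, mask)
    ]


def outpaint_canvas(image, pad, fill=0):
    """Pad an image on the left and right and return (canvas, mask).

    The mask marks the new border columns (1) that the model would fill in.
    """
    canvas = [[fill] * pad + row + [fill] * pad for row in image]
    mask = [[1] * pad + [0] * len(row) + [1] * pad for row in image]
    return canvas, mask


original = [[10, 20, 30],
            [40, 50, 60]]
generated = [[11, 99, 31],
             [41, 98, 61]]
tree_mask = [[0, 1, 0],
             [0, 1, 0]]  # 1 = the region the user painted over

# Inpainting: only the masked middle column changes.
edited = composite(original, generated, tree_mask)

# Outpainting: the canvas grows and only the new border is marked for generation.
canvas, border_mask = outpaint_canvas(original, pad=1)
```

In this sketch, inpainting and outpainting differ only in where the mask sits: inside the existing image, or over newly added canvas.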

Style Reference and Character Consistency

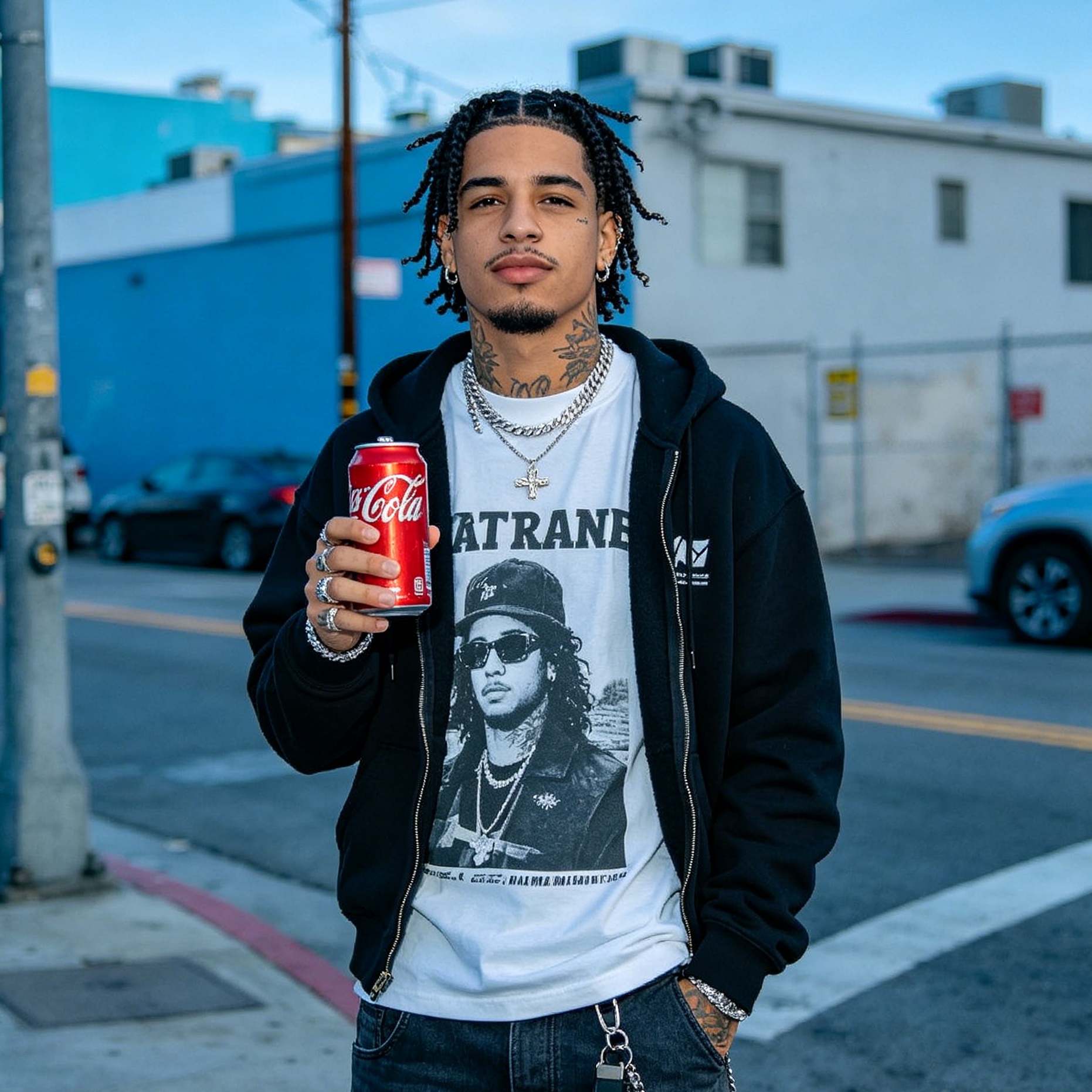

A major challenge in AI generation has been maintaining the same character or style across multiple images. Some generators now address this directly; Midjourney, for example, offers "Style Reference" (--sref) and "Character Reference" (--cref) parameters. By providing a source image, you can tell the AI to apply the color palette and brushwork of that image to a new prompt, or to keep the facial features of a person from a previous generation in a new scene. The match is close rather than pixel-perfect, but it is a major improvement for storyboarding and brand consistency.

Comparing the Best AI Drawing Generators in 2025

Choosing the right AI drawing generator depends on the specific requirements of the project. In my experience testing these models for various commercial projects, each has a distinct "personality" and technical strength.

Midjourney for High-End Artistic Aesthetics

Midjourney remains the gold standard for artistic flair. It doesn't just follow instructions; it interprets them with a certain cinematic sensibility. It excels at complex lighting, atmosphere, and "vibe."

- Best for: Editorial illustrations, concept art, and high-concept photography.

- Pro Tip: Use the --v 6.1 or --v 7 models for the highest level of detail. Midjourney thrives on stylistic descriptors like "Kodachrome," "double exposure," or "brutalist architecture."

DALL-E 3 and ChatGPT for Intuitive Prompting

DALL-E 3, integrated into ChatGPT, is perhaps the most "intelligent" model. It has an incredible grasp of complex, multi-layered instructions. If you ask for "a red ball on a blue box next to a yellow pyramid under a green spotlight," it will get the spatial relationships right more often than its competitors.

- Best for: Beginners, storyboarding, and users who want to talk to the AI like a human collaborator.

- User Experience: Because it is conversational, you can simply say "make the sky darker" or "add more people," and it understands the context of the previous image.

Adobe Firefly for Commercial and Design Integration

Adobe Firefly is built differently. According to Adobe, it is trained on licensed content such as Adobe Stock images and on public domain material, and Adobe positions it as "commercially safe." The aim is to let businesses use the generated output with less of the copyright risk associated with models trained on scraped web data.

- Best for: Corporate design, marketing assets, and Photoshop users.

- Integration: Its deepest strength is its integration into the Creative Cloud. Using "Generative Fill" directly inside Photoshop allows for a seamless workflow between AI generation and manual pixel editing.

FLUX for Photorealism and Human Anatomy

FLUX is the newcomer that has taken the industry by storm. It has largely solved the "AI hands" problem—the tendency for AI to generate six fingers or mangled limbs. It is also exceptionally good at rendering legible text within images, something that used to be a hallmark of "AI failure."

- Best for: Photorealistic portraits, product mockups with text, and high-detail realism.

- Performance: It requires significant VRAM if run locally, but cloud-based versions are incredibly fast and precise.

Stable Diffusion for Open Source Mastery

Stable Diffusion (specifically SDXL and the newer SD 3.5) is for the "power user." Its model weights are openly available, meaning you can run it on your own hardware without a subscription, subject to the license of the specific model version. More importantly, it allows for the use of "ControlNets," which are external models that give you surgical control over poses, depth maps, and edges.

- Best for: Developers, tech-savvy artists, and those who need absolute privacy and control.

- Customization: You can train your own "LoRAs" (Low-Rank Adaptations) to teach the AI a specific person’s face or a very niche artistic style.

Master the Art of Prompt Engineering

To get the most out of an AI drawing generator, you must treat your prompt as a technical specification rather than a casual suggestion. In my time refining workflows for creative agencies, I’ve found that a structured prompt is the difference between a "cool" image and a "usable" one.

Structuring Your Creative Commands

A professional prompt should follow a clear hierarchy:

- Subject: What is the main focus? (e.g., "A futuristic cyberpunk samurai").

- Action/Context: What is happening? (e.g., "leaning against a neon-lit ramen stall in the rain").

- Style/Medium: What does it look like? (e.g., "Cinematic street photography, 35mm lens, grainy film texture").

- Lighting/Color: How is it lit? (e.g., "High-contrast chiaroscuro, cyan and magenta neon highlights").

- Technical Parameters: Aspect ratio, quality, or model version (e.g., --ar 16:9 --stylize 250).

Instead of saying "a beach at sunset," try: "Empty tropical beach at golden hour, long exposure photography showing the motion of the waves, ultra-high resolution, vibrant orange and purple sky, 8k, shot on Fujifilm."
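
The hierarchy above can be captured in a small helper that assembles the parts in a fixed order. This is a minimal sketch; the `PromptSpec` class and its field names are hypothetical, and the trailing flags follow Midjourney-style syntax, which other tools may not accept.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the five prompt layers described above."""
    subject: str
    context: str
    style: str
    lighting: str
    parameters: str = ""

    def render(self) -> str:
        # Subject and action read as one phrase; the other layers are comma-separated.
        scene = f"{self.subject} {self.context}".strip()
        layers = [part for part in (scene, self.style, self.lighting) if part]
        return f"{', '.join(layers)} {self.parameters}".strip()


prompt = PromptSpec(
    subject="A futuristic cyberpunk samurai",
    context="leaning against a neon-lit ramen stall in the rain",
    style="Cinematic street photography, 35mm lens, grainy film texture",
    lighting="High-contrast chiaroscuro, cyan and magenta neon highlights",
    parameters="--ar 16:9 --stylize 250",
).render()

print(prompt)
```

Keeping the layers as separate fields makes it easy to vary one of them, such as the lighting, while holding the rest of the specification constant.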

The Power of Negative Prompts

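Sometimes, telling the AI what not to do is as important as telling it what to do. Negative prompts act as a filter. Common negative terms include "blurry," "deformed limbs," "text," "watermark," or "low resolution." Stable Diffusion interfaces usually provide a dedicated negative prompt field, and Midjourney offers the --no parameter for the same purpose. Support varies by model: some FLUX variants, for example, do not use a negative prompt by default, so check the tool you are using. Where it is available, negative prompting helps clean up the output and keeps the AI from defaulting to common "low-quality" artifacts.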
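
In Stable Diffusion-style pipelines, a negative prompt is commonly implemented through classifier-free guidance: at each denoising step the model makes one prediction for the prompt and one for the negative prompt, then pushes the result away from the negative one. The sketch below is a simplified illustration with two-element lists standing in for the model's predictions; the function name and values are assumptions made for this example.

```python
def guided_noise(negative_pred, positive_pred, guidance_scale):
    """Move the prediction away from the negative prompt and toward the prompt."""
    return [
        neg + guidance_scale * (pos - neg)
        for neg, pos in zip(negative_pred, positive_pred)
    ]


positive_pred = [1.0, 2.0]  # stand-in prediction conditioned on the prompt
negative_pred = [0.5, 1.0]  # stand-in prediction conditioned on the negative prompt

# A scale of 1.0 simply returns the prompt-conditioned prediction.
unsteered = guided_noise(negative_pred, positive_pred, guidance_scale=1.0)
# A larger scale exaggerates the difference between the two predictions.
steered = guided_noise(negative_pred, positive_pred, guidance_scale=3.0)
```

This also suggests why models that do not run this two-prediction step at generation time may ignore negative prompts.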

Practical Applications Across Different Industries

AI drawing generators are no longer just for making "cool pictures." They are becoming integrated into professional pipelines across various sectors.

Marketing and Social Media

Marketing teams use these tools to generate hundreds of variations for A/B testing in hours. Instead of hiring a photographer for a specialized shoot, they can generate high-end product lifestyle shots by using "Image to Image" to place their product in various AI-generated environments. This drastically reduces the "cost per creative" while maintaining high visual standards.

Architecture and Interior Design

Architects use AI drawing generators for rapid ideation. By feeding a basic floor plan into the generator, they can visualize dozens of different facade materials, lighting setups, and landscaping options. It allows clients to "feel" the space before a single brick is laid. In interior design, users can take a photo of an empty room and use AI to "stage" it with different furniture styles (Mid-century modern, Industrial, Scandi) in seconds.

Game Development and Concept Art

Concept artists use AI as a "digital mood board." They might generate 50 different versions of a "forest spirit" to find a specific silhouette or color scheme that sparks an idea. This doesn't replace the artist but speeds up the "blue-sky" phase of development, allowing the human artist to focus on the final, refined character design.

Education and Visual Aids

Teachers are using AI drawing generators to create custom illustrations for complex concepts. Whether it’s a visualization of "the molecular structure of a diamond" or a "historical reconstruction of ancient Rome," these tools make learning more visual and engaging for students.

The Future of AI Drawing Generators

We are moving toward a world where the boundary between 2D images, 3D models, and video is blurring. The next generation of AI drawing generators will likely allow for real-time interaction, where you can move a light source in a 3D-like latent space or change the camera angle after the image has been "drawn."

As these tools become more accessible, the value will shift away from the ability to create an image and toward the ability to curate and direct a vision. The "prompt" will evolve into a sophisticated dialogue between human intent and machine execution.

Conclusion

An AI drawing generator is more than just a novelty; it is a fundamental shift in the democratization of creativity. By understanding the underlying diffusion technology, choosing the right tool for the job—whether it’s Midjourney for art or Adobe Firefly for business—and mastering the nuances of prompt engineering, you can transform simple text into high-impact visual assets. As the technology continues to mature, the only limit to what can be created is the specificity of the words used to describe it.

FAQ

What is the difference between an AI drawing generator and an AI art generator?

While the terms are often used interchangeably, an AI drawing generator often refers to tools focused on specific "hand-drawn" styles like sketches, ink renderings, or line art. An AI art generator is a broader category that includes photorealistic images, oil paintings, and 3D renders. Most modern tools like Adobe Firefly or Midjourney can function as both.

Can I use AI-generated drawings for commercial purposes?

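It depends on the tool. Adobe Firefly is specifically designed for commercial safety. Midjourney's terms allow commercial use for paid subscribers, and OpenAI's terms allow commercial use of images generated with DALL-E 3 in ChatGPT. Terms change, so check the current version for each tool. In addition, copyright laws regarding AI-generated content are still evolving, and in many jurisdictions, including the United States, works generated entirely by AI without meaningful human authorship are not eligible for copyright protection.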

Why do AI drawing generators struggle with text and fingers?

This is due to the way diffusion models learn. They understand the "concept" of a hand (flesh-colored with appendages) but don't necessarily understand the anatomical "logic" that there must be exactly five fingers. Text fails for a similar reason: the model learns what lettering looks like without learning how words are spelled. Newer models like FLUX and DALL-E 3 have significantly improved on both fronts, which is generally attributed to larger training datasets and better text-to-visual alignment.

Is there a free AI drawing generator?

Yes, tools like Craiyon offer free generation with some limitations on speed and quality. Stable Diffusion is also free if you have the hardware to run it locally. Many paid services like Midjourney or Adobe Firefly offer limited free trials or "credits" to new users.

How do I make my AI drawings look more realistic?

To achieve realism, focus on photographic technical terms in your prompt. Use keywords like "depth of field," "ISO 100," "f/1.8," "natural lighting," and "high-resolution skin texture." Avoid using generic words like "photorealistic" and instead describe the specific elements that make a photo look real.
