Finding the Best AI Photo Generator to Match Your Creative Style

The landscape of digital imagery has undergone a seismic shift with the advent of latent diffusion models and generative adversarial networks. An AI photo generator is no longer just a novelty for creating surreal art; it has become a fundamental tool in the arsenal of photographers, graphic designers, and marketers. These systems translate complex natural language descriptions into high-fidelity visual assets, effectively bridging the gap between human imagination and digital execution.

Choosing the right platform requires an understanding of the underlying technology, the specific aesthetic strengths of different models, and the practicalities of prompt engineering. Whether the goal is to produce photorealistic architecture renders, stylized concept art, or commercially safe marketing materials, selecting the appropriate AI photo generator is the first step toward professional-grade output.

Understanding the Technology Behind AI Image Synthesis

To use these tools effectively, it is essential to understand how they transform text into pixels. Most modern AI photo generators, including industry leaders like Midjourney and Stable Diffusion, are built on a technique known as diffusion.

The Diffusion Process Explained

Diffusion models work by learning to reverse a process of gradual degradation. During training, the model is shown billions of images. These images are systematically obscured by adding "Gaussian noise" until they become unrecognizable static. The model is trained to predict the noise that was added at each step, which is what enables it to reverse the process.

When a user enters a prompt into an AI photo generator, the system starts with a canvas of random noise. Guided by the text embeddings (the mathematical representation of the words), the model iteratively "denoises" the canvas: at each step it predicts part of the remaining noise and removes it, steering the result toward patterns that match the prompt until the static becomes a coherent, high-resolution image. In latent diffusion systems such as Stable Diffusion, this refinement happens in a compressed "latent" representation that is decoded into pixels at the end. This iterative refinement is why AI-generated photos often possess a level of detail and texture that surpasses older generative methods.
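The noising and denoising loop described above can be sketched in a few lines of Python. This is a toy under stated assumptions, not how any specific product is implemented: it assumes a DDPM-style linear noise schedule (the 1,000 steps and `beta_start`/`beta_end` values are common textbook defaults chosen for illustration), works on a short list of numbers instead of an image latent, and replaces the trained network with a hypothetical `predict_noise` callback. The reverse loop uses a deterministic DDIM-style update.

```python
import math
import random
from typing import Callable, List

Vector = List[float]


def make_schedule(num_steps: int = 1000, beta_start: float = 1e-4,
                  beta_end: float = 0.02) -> Vector:
    """Cumulative products alpha_bar_t of a linear noise schedule."""
    alpha_bars: Vector = []
    prod = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        prod *= 1.0 - beta  # share of the original signal's variance left after step t
        alpha_bars.append(prod)
    return alpha_bars


def add_noise(x0: Vector, t: int, alpha_bars: Vector,
              rng: random.Random) -> Vector:
    """Forward (training-time) process: jump straight to noise level t."""
    ab = alpha_bars[t]
    return [math.sqrt(ab) * x + math.sqrt(1.0 - ab) * rng.gauss(0.0, 1.0)
            for x in x0]


def denoise(xt: Vector, t: int, alpha_bars: Vector,
            predict_noise: Callable[[Vector, int], Vector],
            stride: int = 50) -> Vector:
    """Reverse (generation-time) process using deterministic DDIM-style steps."""
    steps = list(range(t, -1, -stride))  # e.g. 999, 949, ..., 49
    x = xt
    for i, cur in enumerate(steps):
        eps = predict_noise(x, cur)  # a trained network would make this guess
        ab = alpha_bars[cur]
        # Clean signal implied by the current sample and the predicted noise.
        x0_hat = [(xi - math.sqrt(1.0 - ab) * e) / math.sqrt(ab)
                  for xi, e in zip(x, eps)]
        if i + 1 == len(steps):
            return x0_hat
        ab_prev = alpha_bars[steps[i + 1]]
        # Move to the next, less noisy level along the same noise direction.
        x = [math.sqrt(ab_prev) * c + math.sqrt(1.0 - ab_prev) * e
             for c, e in zip(x0_hat, eps)]
    return x


if __name__ == "__main__":
    rng = random.Random(0)
    alpha_bars = make_schedule()
    x0 = [0.5, -1.0, 2.0]                     # stand-in for a tiny "image"
    xt = add_noise(x0, 999, alpha_bars, rng)  # almost pure static

    def oracle(x: Vector, t: int) -> Vector:
        # Hypothetical perfect noise predictor that "knows" x0.
        ab = alpha_bars[t]
        return [(xi - math.sqrt(ab) * c) / math.sqrt(1.0 - ab)
                for xi, c in zip(x, x0)]

    print(denoise(xt, 999, alpha_bars, oracle))  # approximately [0.5, -1.0, 2.0]
```

The `stride` argument is the toy equivalent of a "sampling steps" setting: the 1,000 training noise levels are traversed in 20 jumps here. A real model's noise guesses are imperfect, which is why more steps usually trade speed for quality.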

Generative Adversarial Networks (GANs)

While diffusion is currently the dominant architecture, Generative Adversarial Networks (GANs) laid the groundwork for this field and are still used in specific high-speed applications, because a trained generator produces an image in a single forward pass rather than through many denoising steps. A GAN consists of two neural networks: a generator that creates images and a discriminator that evaluates them against real data. They are locked in a continuous loop where the generator tries to "fool" the discriminator into thinking its creations are real. This competition drives the system toward higher realism, making GANs well suited to localized edits and real-time filters.
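The generator-versus-discriminator contest can be summarized by the two loss functions the networks minimize. This sketch uses the standard binary cross-entropy formulation with the widely used "non-saturating" generator loss; the discriminator outputs are plain probabilities supplied by hand, standing in for real networks.

```python
import math
from typing import Sequence


def _mean(values: Sequence[float]) -> float:
    return sum(values) / len(values)


def discriminator_loss(d_real: Sequence[float], d_fake: Sequence[float]) -> float:
    """BCE loss: low when D scores real images near 1 and generated ones near 0."""
    return (-_mean([math.log(p) for p in d_real])
            - _mean([math.log(1.0 - p) for p in d_fake]))


def generator_loss(d_fake: Sequence[float]) -> float:
    """Non-saturating loss: low when D scores generated images near 1 ("fooled")."""
    return -_mean([math.log(p) for p in d_fake])
```

With a confident discriminator (0.9 on real images, 0.1 on fakes) the generator loss is -ln 0.1 ≈ 2.30, which is the pressure that pushes the generator to improve. At the theoretical equilibrium, where the discriminator outputs 0.5 everywhere, its loss settles at 2 ln 2 ≈ 1.39.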

Top AI Photo Generators Dominating the Current Landscape

The market is saturated with various tools, but a few have established themselves as benchmarks for quality, speed, and versatility. Each caters to a slightly different demographic and use case.

Midjourney: The Aesthetic Powerhouse

Midjourney is widely regarded as the most "artistic" AI photo generator available. Unlike models that aim for clinical accuracy, Midjourney’s algorithms are tuned to produce images with high aesthetic value, dramatic lighting, and rich textures.

- Platform Mechanics: Midjourney is used through Discord and its own web interface. Its community-centric approach allows users to see others' creations, fostering a collaborative environment for learning.

- Best Use Cases: High-end concept art, cinematic photography, and stylized illustrations.

- Specific Strengths: The model excels at "lighting" and "composition." For instance, combining the `--v 6.1` parameter (which selects the model version) with `--ar 16:9` (which sets a 16:9 aspect ratio) produces cinematic wide-screen shots that look like they were captured on a professional film set. It handles atmospheric effects like fog, lens flare, and bokeh with remarkable sophistication.

DALL-E 3 by OpenAI: Precision and Logic

Integrated into ChatGPT, DALL-E 3 is the most accessible and logically sound AI photo generator. Its primary strength lies in its "prompt adherence"—the ability to follow complex, multi-layered instructions without omitting details.

- Interaction Model: Users can speak to the AI in natural language. If an image isn't quite right, the user can simply say, "Make the sun brighter" or "Add a black cat to the windowsill," and the model understands the context of the previous generation.

- Best Use Cases: Complex scenes with specific spatial requirements, images requiring legible text, and educational diagrams.

- Technical Edge: DALL-E 3 is exceptional at rendering text within images, a task where many other diffusion models fail. If a prompt requires a "storefront with a sign saying 'The Daily Brew'," DALL-E 3 is significantly more likely to spell the words correctly.
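ChatGPT needs no code, but DALL-E 3 has also been available to developers through OpenAI's Images API. The sketch below assumes the official `openai` Python package (v1-style client) and an `OPENAI_API_KEY` environment variable; `build_dalle3_request` and `generate` are hypothetical helper names, and model availability and accepted options can change, so check OpenAI's current documentation before relying on it.

```python
from typing import Any, Dict

# Options the DALL-E 3 endpoint has accepted (per OpenAI's docs at the time of writing).
DALLE3_SIZES = {"1024x1024", "1792x1024", "1024x1792"}
DALLE3_QUALITIES = {"standard", "hd"}
DALLE3_STYLES = {"vivid", "natural"}


def build_dalle3_request(prompt: str, size: str = "1024x1024",
                         quality: str = "standard",
                         style: str = "vivid") -> Dict[str, Any]:
    """Validate options and assemble keyword arguments for images.generate()."""
    if size not in DALLE3_SIZES:
        raise ValueError(f"unsupported size {size!r}")
    if quality not in DALLE3_QUALITIES:
        raise ValueError(f"unsupported quality {quality!r}")
    if style not in DALLE3_STYLES:
        raise ValueError(f"unsupported style {style!r}")
    # DALL-E 3 generates one image per request, so n is fixed at 1.
    return {"model": "dall-e-3", "prompt": prompt, "size": size,
            "quality": quality, "style": style, "n": 1}


def generate(prompt: str, **options: Any) -> str:
    """Call the API and return the image URL (needs the openai package and a key)."""
    from openai import OpenAI  # imported lazily so the builder runs without the SDK

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.images.generate(**build_dalle3_request(prompt, **options))
    image = response.data[0]
    # DALL-E 3 may rewrite prompts; the rewritten version is returned for inspection.
    print("Revised prompt:", image.revised_prompt)
    return image.url
```

For example, `generate("A storefront with a sign saying 'The Daily Brew'", size="1792x1024")` would request a wide image of the storefront from the text above. Printing the revised prompt is useful when checking how closely the model followed a multi-part instruction.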

Adobe Firefly: The Commercial Standard

For professionals concerned about legal safety and workflow integration, Adobe Firefly is the preferred choice. Adobe states that it is trained on licensed content such as Adobe Stock, along with openly licensed and public domain material, so the output is designed to be "commercially safe" and to avoid infringing on the intellectual property of living artists.

- Integration: Firefly is built directly into Photoshop through the "Generative Fill" and "Generative Expand" features. This allows designers to extend a landscape or add objects to an existing photo in seconds.

- Best Use Cases: Corporate marketing, editorial design, and rapid prototyping within a professional design pipeline.

- Ethical Footprint: Adobe provides Content Credentials, a "nutrition label" for digital content that tracks if AI was used in the creation process, promoting transparency in journalism and advertising.

Stable Diffusion: The Open Source Frontier

Stable Diffusion represents the "power user" end of the spectrum. Because it is open-source, it can be run locally on high-end hardware, giving the user total control over every aspect of the generation process.

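- Customization: Users can load LoRAs (Low-Rank Adaptation), small add-on weight files fine-tuned to a particular style, character, or subject. ControlNets go further, conditioning generation on a guide image such as a pose skeleton, edge map, or depth map to dictate the exact pose of a character or the specific architecture of a building.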

- Best Use Cases: High-level technical projects, private generations without cloud restrictions, and developers building their own AI applications.

- Learning Curve: Stable Diffusion has a steep learning curve compared to DALL-E 3, requiring knowledge of sampling steps, CFG scales, and seed management.
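Two of the knobs above reduce to short formulas. A LoRA adds a low-rank update to a frozen weight matrix, W' = W + (alpha / r) * B A, and the "CFG scale" is the guidance factor of classifier-free guidance, which extrapolates from an unconditional noise prediction toward the prompt-conditioned one. This pure-Python sketch shows both on tiny hand-made matrices and vectors; the function names are hypothetical. In common Stable Diffusion pipelines, such as Hugging Face diffusers, a negative prompt's prediction takes the place of the unconditional one.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product of an (n x m) and an (m x p) matrix."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def merge_lora(w: Matrix, lora_b: Matrix, lora_a: Matrix, alpha: float) -> Matrix:
    """Return W + (alpha / r) * (B @ A) for a rank-r LoRA update.

    lora_b is d x r and lora_a is r x k, so training touches r * (d + k)
    numbers instead of the d * k numbers in the frozen weight W.
    """
    rank = len(lora_a)
    scale = alpha / rank
    delta = matmul(lora_b, lora_a)
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


def guided_noise(eps_uncond: Vector, eps_cond: Vector, cfg_scale: float) -> Vector:
    """Classifier-free guidance: extrapolate from the unconditional (or
    negative-prompt) prediction toward the prompt-conditioned one.
    A scale of 1.0 returns eps_cond; larger values follow the prompt harder."""
    return [u + cfg_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]
```

The low-rank design is why LoRA files are small enough to share and stack. For the guidance scale, diffusers' Stable Diffusion pipeline defaults to 7.5; very high values tend to oversaturate images, which is part of the learning curve described above.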

Mastering Prompt Engineering for Photorealistic Results

The quality of an AI-generated photo is closely tied to the quality of the input. Successful prompt engineering requires a blend of descriptive language and technical photography terminology. Based on extensive testing across multiple platforms, a structured approach to prompting consistently yields better results.

The Structure of a High-Value Prompt

To get the most out of an AI photo generator, think of the prompt as a set of instructions for a photographer on a set. A comprehensive prompt should include:

- The Subject: Be specific. Instead of "a dog," use "a weathered Golden Retriever with grey fur around its muzzle."

- The Action/State: What is the subject doing? "Leaping through a field of tall grass."

- The Environment: Where is this taking place? "A sun-drenched meadow in the Scottish Highlands during autumn."

- Lighting and Atmosphere: This is the most critical element for realism. "Golden hour lighting with long shadows and soft, hazy atmosphere."

- Technical Camera Settings: Using camera terms nudges the AI to mimic specific lens behaviors. Adding "Shot on 35mm f/1.8 lens," "Shallow depth of field," or "8k resolution, photorealistic" tells the model to focus on texture and blur.
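The five-part structure above maps naturally onto a small template. This sketch simply joins the non-empty parts with commas in the order listed; the `PhotoPrompt` class, its field names, and the comma-joined ordering are an illustrative convention, not a format any particular generator requires.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PhotoPrompt:
    subject: str
    action: str = ""
    environment: str = ""
    lighting: str = ""
    camera: List[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the non-empty parts, most important first, separated by commas."""
        parts = [self.subject, self.action, self.environment, self.lighting, *self.camera]
        return ", ".join(p.strip() for p in parts if p and p.strip())


if __name__ == "__main__":
    prompt = PhotoPrompt(
        subject="a weathered Golden Retriever with grey fur around its muzzle",
        action="leaping through a field of tall grass",
        environment="a sun-drenched meadow in the Scottish Highlands during autumn",
        lighting="golden hour lighting with long shadows and soft, hazy atmosphere",
        camera=["shot on 35mm f/1.8 lens", "shallow depth of field"],
    )
    print(prompt.render())
```

Keeping the parts separate makes it easy to vary one element, such as the lighting, while holding the rest of the scene constant.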

Advanced Prompting Techniques

- Negative Prompting: In tools like Stable Diffusion (through a separate negative prompt field) and Midjourney (via the `--no` parameter), you can specify what you don't want. For example, `--no blurry, distorted hands, low resolution` can significantly improve the clarity of the output.

- Weighting: Some platforms allow users to assign numerical weights to certain words. If you want more focus on the "red flowers" than the "blue sky," you might use `red flowers::2 blue sky::1` in Midjourney.

- Style References: Rather than describing a style for paragraphs, you can use the `--sref` parameter in Midjourney to supply a reference image (typically as an image URL) and tell the AI to match its color palette and mood.
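Because Midjourney prompts are plain text, the parameters above can be assembled programmatically, for example when producing many variations. The helper below only builds the string to paste into Discord or the web interface; it does not call Midjourney, and its name and argument layout are hypothetical. The parameter spellings (`::` weights, `--ar`, `--v`, `--no`, `--sref`, `--seed`) follow Midjourney's documented syntax, but supported versions change, so check the current docs.

```python
from typing import Mapping, Optional, Sequence


def midjourney_prompt(weighted_parts: Mapping[str, float],
                      exclude: Sequence[str] = (),
                      aspect_ratio: Optional[str] = None,
                      version: Optional[str] = None,
                      style_ref_url: Optional[str] = None,
                      seed: Optional[int] = None) -> str:
    """Build a Midjourney prompt string; parameters go after the prompt text."""
    # Multi-prompt weighting: "part::weight" pairs separated by spaces.
    text = " ".join(f"{part}::{weight:g}" for part, weight in weighted_parts.items())
    params = []
    if aspect_ratio:
        params.append(f"--ar {aspect_ratio}")
    if version:
        params.append(f"--v {version}")
    if exclude:
        params.append("--no " + ", ".join(exclude))  # negative prompt
    if style_ref_url:
        params.append(f"--sref {style_ref_url}")     # style reference image
    if seed is not None:
        params.append(f"--seed {seed}")
    return " ".join([text, *params])


if __name__ == "__main__":
    print(midjourney_prompt({"red flowers": 2, "blue sky": 1},
                            exclude=["blurry", "distorted hands", "low resolution"],
                            aspect_ratio="16:9", version="6.1"))
    # red flowers::2 blue sky::1 --ar 16:9 --v 6.1 --no blurry, distorted hands, low resolution
```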

Specialized Workflows for Professional Creative Teams

In 2025 and 2026, the utility of AI photo generators has expanded from single image generation to full creative workflows. Tools like Glima AI and PhotoDirector have integrated these generative capabilities into comprehensive suites.

Integrated Content Suites

Platforms like Glima AI have moved beyond text-to-image to offer "no-code creative studios." This allows a marketing team to generate a product shot and then immediately use a "Magic Eraser" to remove a blemish or a "Background Remover" to prep the asset for e-commerce. The ability to generate static images and then convert them into cinematic video clips using a single interface represents the next evolution of AI creativity.

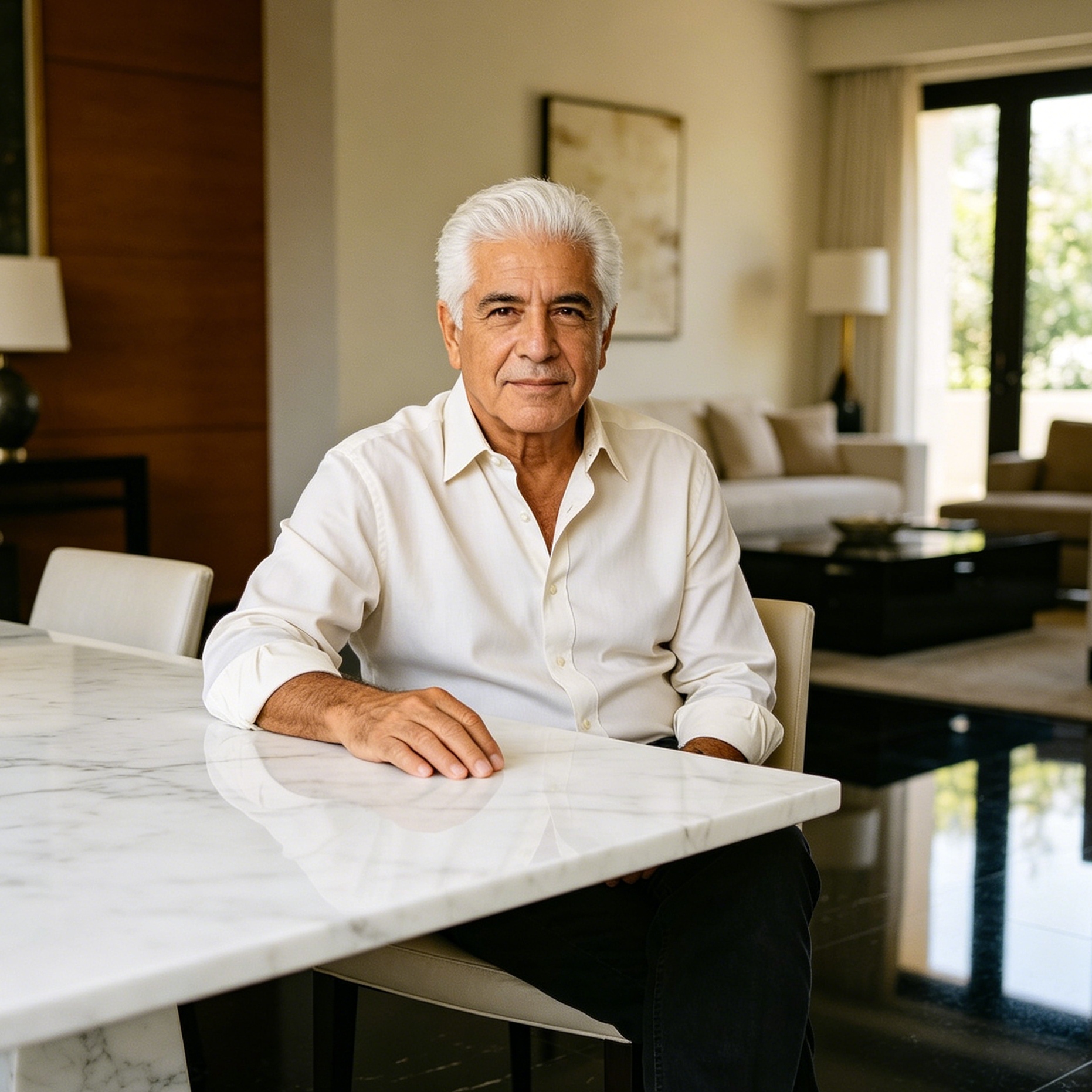

Enhancing Realism in Professional Headshots

AI is also being used to solve practical problems like the need for professional headshots without a studio session. By fine-tuning a model on a dozen casual photos of a person, AI photo generators can now place that individual in a professional suit, in a well-lit office, with a convincing likeness. This "virtual photoshoot" capability can save organizations substantial photography costs.

Overcoming Common AI Generation Challenges

Despite the rapid progress, AI photo generators still face several technical hurdles. Recognizing these limitations is the key to managing expectations and refining the output.

The "Hand" Challenge

Rendering human hands remains one of the most difficult tasks for AI. Because hands are highly articulated and can appear in thousands of different orientations, models often struggle with the correct number of fingers or joint placements.

- The Solution: Use prompts that describe the hands doing something specific, like "holding a ceramic mug with both hands," which provides the AI with more structural context. Alternatively, use AI inpainting tools to "redraw" only the hand portion of an otherwise perfect image.
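Inpainting works by regenerating only a masked region and leaving the rest of the image untouched. Real inpainting models also condition the new content on the surrounding pixels; this sketch shows only the final compositing step, using a hypothetical grayscale image stored as nested lists and a mask in which 1 marks the hand region to redraw.

```python
from typing import List

Image = List[List[float]]  # grayscale pixel values in [0, 1], row-major


def composite_inpaint(original: Image, regenerated: Image, mask: Image) -> Image:
    """Keep original pixels where mask is 0 and regenerated pixels where it is 1.

    Fractional mask values (a feathered edge) blend the two, which hides seams.
    """
    return [[m * g + (1.0 - m) * o for o, g, m in zip(o_row, g_row, m_row)]
            for o_row, g_row, m_row in zip(original, regenerated, mask)]
```

Feathering the mask edge is the usual way to avoid a visible border between the redrawn hand and the untouched image.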

Consistency in Multi-Image Projects

Maintaining the same character or environment across multiple generations is a known pain point.

- The Solution: Use the "Seed" number. If you find an image you like, copy its seed (in Midjourney, it can be set with the `--seed` parameter) and use it in subsequent prompts to maintain a similar visual "DNA." Midjourney's "Character Reference" (`--cref`) parameter is also a powerful tool designed specifically for this purpose.
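Reusing a seed helps because, in diffusion generators, the seed determines the random noise the process starts from; with the same seed, prompt, model, and settings, the starting canvas is identical and the result is usually reproducible. This sketch uses Python's `random` module in place of the GPU random generators real tools use, and `initial_noise` is a hypothetical helper. Changing the prompt still changes the outcome, which is why a seed preserves a similar "DNA" rather than guaranteeing an identical character.

```python
import random
from typing import List


def initial_noise(seed: int, size: int) -> List[float]:
    """Hypothetical helper: the random starting canvas for a given seed."""
    rng = random.Random(seed)  # independent generator, seeded explicitly
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


if __name__ == "__main__":
    first = initial_noise(1234, 4)
    again = initial_noise(1234, 4)
    other = initial_noise(1235, 4)
    print(first == again)  # True: same seed, same starting noise
    print(first == other)  # different seed, different starting noise
```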

Logic and Spatial Awareness

Sometimes an AI will place a moon in front of a cloud or give a horse six legs. This happens because the AI understands the appearance of things but not the physics of them.

- The Solution: Simplify the prompt or use a "Region Editor" to fix specific logical errors. Breaking a complex scene into smaller, simpler prompts and then compositing them in a tool like Photoshop is often the most professional approach.

Ethical Considerations and the Future of AI Photography

As AI-generated photos become harder to distinguish from reality, the ethical landscape grows more complex. Transparency and bias are the two primary concerns for the creative community.

Transparency and Watermarking

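The rise of deepfakes and misinformation has led to a push for digital watermarking and provenance labels. Many major AI photo generators now attach metadata based on the C2PA standard (the technology behind Content Credentials) that identifies an image as AI-generated. Because such metadata can be stripped when an image is re-saved or screenshotted, it is a helpful signal rather than proof. For creators, it is a best practice to disclose the use of AI, especially in journalism or social media contexts where authenticity is expected.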
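In JPEG files, C2PA manifests are stored in APP11 marker segments as JUMBF boxes labeled `c2pa`. The rough check below only looks for such a segment before the image data begins; it does not validate the manifest or its cryptographic signature, and a negative result proves nothing because metadata is easily removed. For real verification, use the C2PA project's own tools. `has_c2pa_segment` is a hypothetical helper.

```python
import struct

SOI = b"\xff\xd8"      # JPEG start-of-image marker
APP11 = 0xEB           # marker where C2PA stores its JUMBF manifest boxes
SOS, EOI = 0xDA, 0xD9  # start of scan / end of image: metadata segments end here


def has_c2pa_segment(jpeg: bytes) -> bool:
    """Heuristic: True if an APP11 segment mentions the 'c2pa' JUMBF label."""
    if not jpeg.startswith(SOI):
        raise ValueError("not a JPEG file (missing SOI marker)")
    pos = 2
    while pos + 4 <= len(jpeg):
        if jpeg[pos] != 0xFF:
            break  # not positioned on a marker; stop instead of guessing
        marker = jpeg[pos + 1]
        if marker in (SOS, EOI):
            break
        # Segment length is big-endian and counts its own two bytes.
        (length,) = struct.unpack(">H", jpeg[pos + 2:pos + 4])
        payload = jpeg[pos + 4:pos + 2 + length]
        if marker == APP11 and b"c2pa" in payload:
            return True
        pos += 2 + length
    return False


if __name__ == "__main__":
    import sys

    for path in sys.argv[1:]:
        with open(path, "rb") as f:
            print(path, has_c2pa_segment(f.read()))
```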

Addressing Algorithmic Bias

AI models are trained on internet data, which often contains societal biases. If you ask for "a doctor," the AI might disproportionately generate images of a specific gender or ethnicity.

- Proactive Prompting: Users can counteract this by being explicit about diversity in their prompts. Using phrases like "a diverse group of professionals" or specifying diverse backgrounds helps ensure that AI-generated media is inclusive.

The Intellectual Property Debate

The legal status of AI-generated art is still evolving. In many jurisdictions, images generated entirely by AI currently cannot be copyrighted because they lack a "human author." However, human contributions such as selecting, arranging, or substantially editing AI output may be protectable, although in the United States the Copyright Office has indicated that prompts alone are generally not enough. For commercial use, always check the Terms of Service of your chosen tool: Adobe Firefly and paid Midjourney plans generally grant commercial usage rights, but free tiers often do not.

Looking Ahead: The Evolution of the AI Photo Generator

As we move toward 2026, the distinction between "AI photo generator" and "image editor" is blurring. We are entering an era of "Multimodal Creativity," where the AI doesn't just generate an image but understands the layers, lighting, and 3D geometry of the scene it has created.

Future models will likely offer:

- Real-Time Collaborative Generation: Multiple users working on a single canvas in a cloud-based environment.

- Total Spatial Control: The ability to move a character within a 2D image as if it were a 3D model.

- Haptic/Sensory Integration: AI that understands the "feel" of textures to better assist in product design and virtual reality.

Summary

The rise of the AI photo generator has democratized high-level visual production. By understanding the differences between artistic tools like Midjourney, logical tools like DALL-E 3, and integrated suites like Adobe Firefly, creators can choose the right engine for their specific needs. Mastering the art of the prompt and staying informed about the ethical implications of this technology are essential for anyone looking to navigate the future of digital media. As these tools continue to evolve, they will not replace the human artist but rather act as a high-powered catalyst for human creativity.

FAQ

What is the best AI photo generator for beginners?

DALL-E 3 is generally considered the best for beginners. Because it is integrated into the ChatGPT interface, you can use natural conversation to create and refine images without needing to learn complex parameters or technical commands.

Can I use AI-generated photos for commercial purposes?

It depends on the platform and your subscription level. Adobe Firefly is specifically designed for commercial safety. Midjourney and DALL-E 3 allow commercial use for paid subscribers, but you should always review the specific Terms of Service, as copyright laws vary by country.

Why do AI photo generators struggle with hands?

AI models don't have a 3D understanding of anatomy; they predict pixel patterns based on 2D images. Since hands have complex geometry and many parts that can overlap, the AI often gets confused by the varying perspectives and "guesses" the placement of fingers incorrectly.

Is there a free AI photo generator available?

Yes, several options exist. Microsoft Designer (formerly Bing Image Creator) uses DALL-E 3 for free. Adobe Firefly offers a limited number of free generative credits each month, and Stable Diffusion is free if you have the hardware to run it locally.

How can I make my AI photos look more realistic?

To increase realism, use specific photography terms in your prompts. Include details about lighting (e.g., "Rembrandt lighting"), camera gear (e.g., "Shot on Sony A7R IV"), and specific textures (e.g., "visible skin pores," "linen fabric texture"). Generic terms like "photorealistic" help most when paired with these technical descriptions rather than used on their own.
